This Iron Mountain Data Centers insight paper sets out the new development, design and construction approaches we have adopted to address the evolving needs of accelerated computing.

The data center industry has pivoted to focus on the requirements of Machine Learning and Artificial Intelligence, building larger, clustered, more densely configured facilities and campuses. But design requirements vary considerably depending on specific AI applications and location, and every customer has a slightly different set of specifications.

Our data center business is one of the fastest-growing operators in the global colo, cloud and AI space, but it is also committed to increasing its leadership in security, sustainability and customer focus.

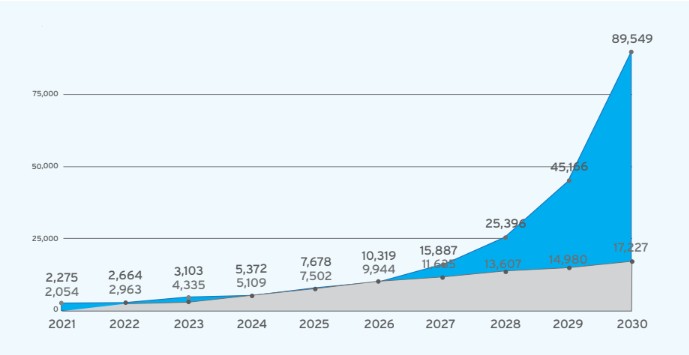

About 20% of total data center capacity is already being used for AI, and Cushman & Wakefield estimates that AI will drive $75 billion in data center demand by 2028, pushing this share up to 35% of the total market.

This puts greater pressure than ever before on data center businesses to build more and bigger AI-ready facilities. According to McKinsey, “to avoid a deficit, at least twice the data center capacity built since 2000 would have to be built in less than a quarter of the time.”

Hyperscalers are the biggest customers, now accounting for as much of the global colocation market as Enterprise IT, and consistently increasing their investment. But there are many others. In addition to growing enterprise and cloud demand, the market is being accelerated by neoclouds, a new generation of specialized cloud computing providers looking for purpose-built AI, ML and HPC facilities. Neoclouds are forecast to expand and, increasingly, dominate the managed infrastructure segment over the next few years.

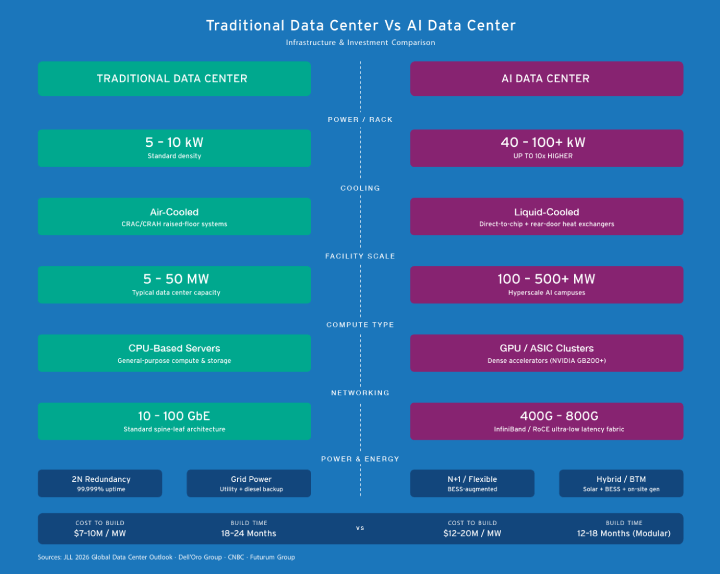

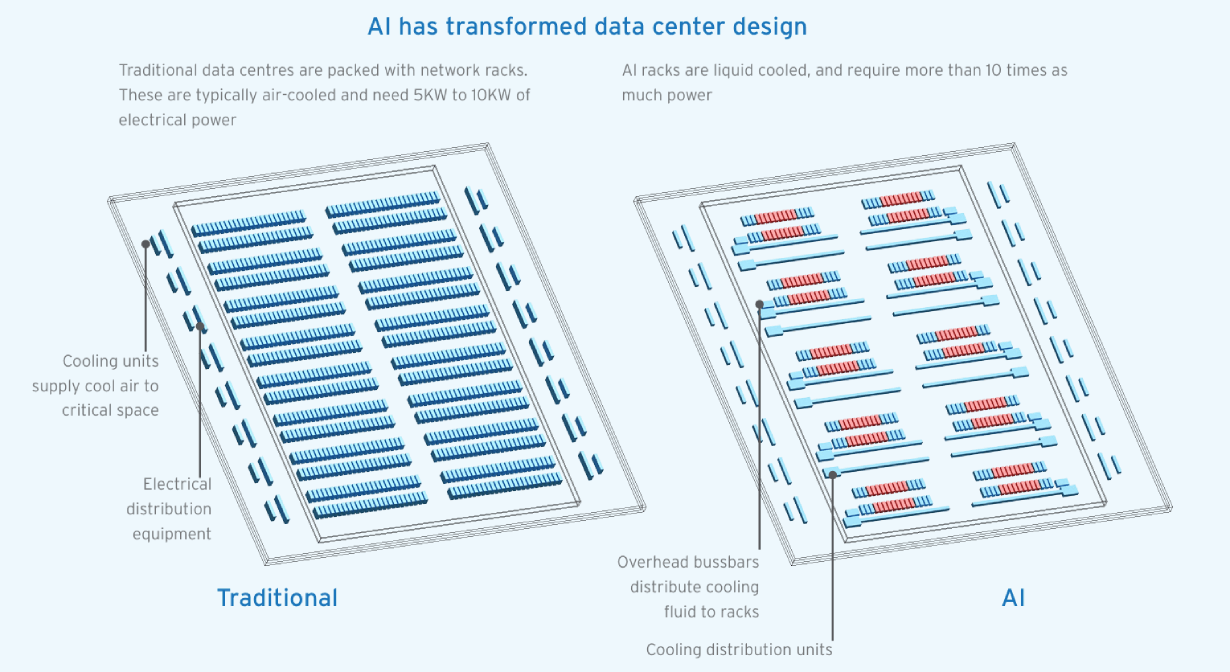

Data centers have traditionally been designed around CPUs, but AI data centers are built around GPUs, which draw far more power and therefore require new types of power distribution and cooling. Often, the sheer number of GPUs necessary for AI workloads also requires far more square footage. The best-known AI facilities are vast AI training factories, but the infrastructure that AI needs actually comes in all shapes and sizes.

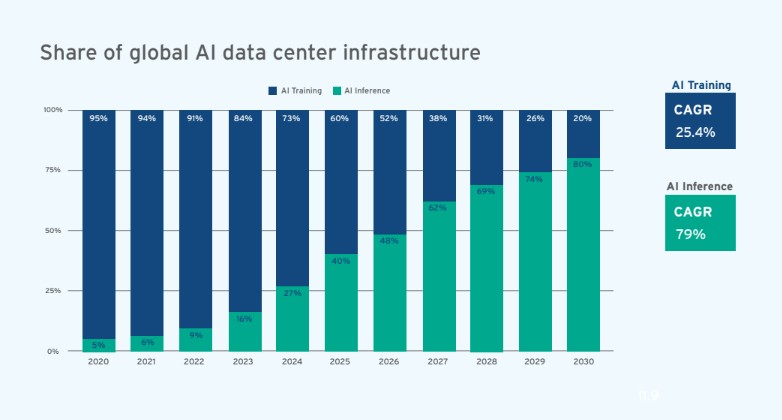

AI infrastructure requirements are in transition just now. The era of Large Language Model (LLM) training is stabilising and an era of production, aka inference, is beginning. In market terms, this is a shift from investment by hyperscalers and neoclouds to growing returns and revenue.

Every AI app (ChatGPT, Gemini, etc.) is referred to as an “inference engine,” and every chatbot query, AI-generated translation or document, as well as every automated decision generated by upcoming AI apps (say, self-driving), is an inference event. Over the coming year or two, AI inference infrastructure will overtake AI training infrastructure. Why is this? Because inference is a recurring need that builds over time, rather than a one-off training run, it will quickly become the dominant form of AI infrastructure and the greatest consumer of power and space.

This has huge implications for infrastructure design and geographic distribution. It is also important to bear in mind that the infrastructure investment currently underway is long-term, looking beyond LLMs to other, equally exciting, AI applications, many of which will have altogether new design requirements.

AI Inference is forecast to grow at a 79% CAGR to 2030, as compared to 25% CAGR for AI Training. This has huge implications for the extent and nature of AI infrastructure.
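To get a feel for what these two growth rates imply, the short sketch below compounds each one over the same period. The five-year horizon is an assumption made for illustration (the forecast only says "to 2030"), and the function name is hypothetical.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Return how many times larger a quantity becomes after
    compounding at the annual rate `cagr` for `years` years."""
    return (1.0 + cagr) ** years


# Assumption: a five-year horizon; the source only says "to 2030".
YEARS = 5

inference = growth_multiple(0.79, YEARS)  # 79% CAGR quoted for AI inference
training = growth_multiple(0.25, YEARS)   # 25% CAGR quoted for AI training

print(f"Inference: {inference:.1f}x, training: {training:.1f}x")
# prints "Inference: 18.4x, training: 3.1x"
```

Under that assumption, inference demand would end the period roughly 18 times larger than it started, against roughly 3 times for training.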

Demand for training data centers will continue to grow fast. Medium-sized inference facilities will be much more numerous and location-sensitive. To support enterprise AI growth, which is forecast to run at 84.9% over the next five years (Structure Research), AI facilities need to be integrated into existing cloud regional architecture, with latency-sensitive inference workloads embedded next to existing cloud on-ramps.

For colocation providers with a hyperscale focus, this growing AI-driven demand represents a major opportunity, and by far the biggest share of this will be by addressing customer needs for inference. Over the next two years, the majority of AI compute will be required to run rather than build models, and this requires greater build speed, densely populated locations, more diverse bandwidth, and denser interconnection, all of which are traditional colocation strengths.

The question is, what is the best way for data center operators to address these evolving customer needs at sufficient scale?

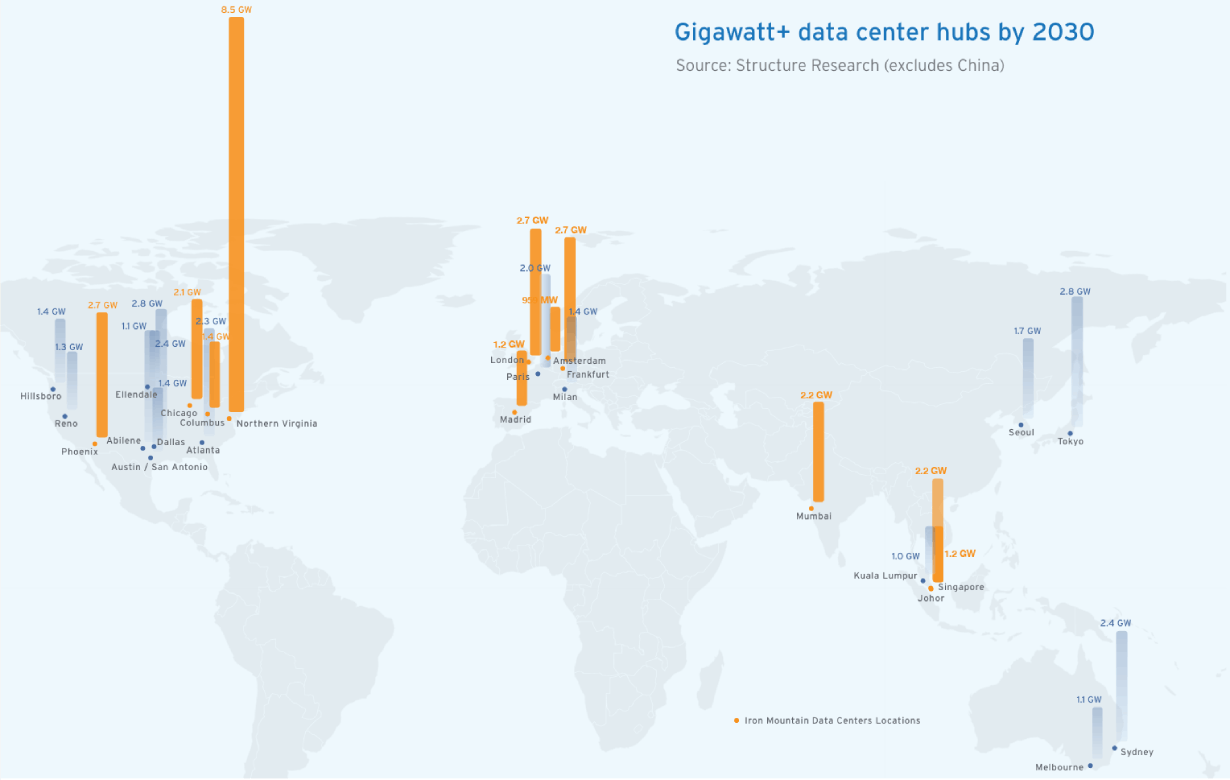

In North America, the largest global hub will continue to be Northern Virginia, more than doubling in size to 8.5 GW, followed by Dallas (2.8 GW), Phoenix (2.7 GW) and the new hub in Abilene (2.4 GW). In Europe, London, Frankfurt, and Paris (all over 2 GW) will lead demand with major new hubs in Milan and Madrid. The largest hubs in Asia Pacific will be Tokyo (2.8 GW) and Sydney (2.4 GW), and the fastest growers will be Johor and Mumbai, with over 2 GW.

According to this research, total data center capacity in the 25 metros marked here is forecast to grow by 162% in five years, from 21.53 GW (2025) to 56.45 GW (2030). Some estimates forecast even faster growth.
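The arithmetic behind the 162% figure can be checked, and converted into an implied annual rate, with a few lines of code. This is a minimal sketch: the function names are illustrative, and the implied annual rate is derived here rather than quoted from the research.

```python
def percent_growth(start: float, end: float) -> float:
    """Total growth from `start` to `end`, as a percentage."""
    return (end / start - 1.0) * 100.0


def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1.0 / years) - 1.0


START_GW, END_GW, YEARS = 21.53, 56.45, 5  # 2025 to 2030, figures from the text

total = percent_growth(START_GW, END_GW)
annual = implied_cagr(START_GW, END_GW, YEARS)

print(f"Total growth: {total:.0f}%")         # prints "Total growth: 162%"
print(f"Implied annual rate: {annual:.1%}")  # prints "Implied annual rate: 21.3%"
```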

These medium-sized facilities are still orders of magnitude larger than traditional data centers. Meeting AI-driven demand will require a huge, concerted, and global investment effort in existing and (primarily) new facilities from the data center industry.

The amount of capital being invested by hyperscalers and neoclouds in advanced chips and accelerated infrastructure is huge. Because of the potential economic and geopolitical impact of AI, many of these investments are at such a scale that they become headline-making national infrastructure announcements.

A new sub-segment of “shovel-ready” developers of major AI sites has also recently emerged to support and accelerate these large developments, taking care of zoning and planning, site purchase, and power and permitting.

While data center operators may not be able to attract the same level of capital as the world’s largest businesses, they must step up to the plate. IMDC, for instance, has committed to doubling its development pipeline from its current level of 1.3 GW to 2.6 GW by 2030.

The precise topology of AI is not yet fixed, and it will continue to evolve as new services are launched, but the vast majority of global infrastructure demand is occurring in a mix of Tier 1 and fast-growing Tier 2 hubs.

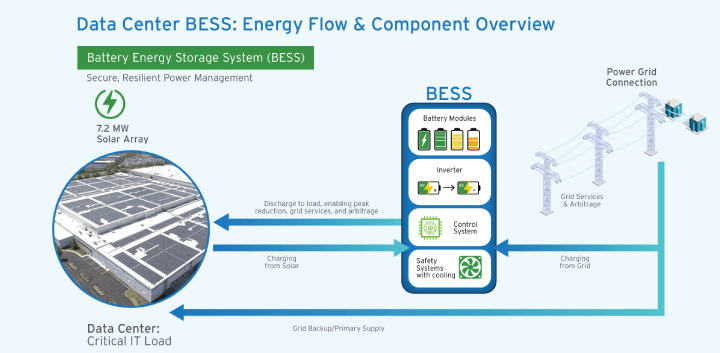

The primary consideration for expansion in and around these hubs is now power availability. Grid constraints are delaying and in some cases halting power interconnection, particularly in mature markets. “Pay to play” stipulations from some grids are driving prices up and diverting capital from active projects. To address this power shortage, a growing array of Behind the Meter (BtM) energy generation solutions is emerging, from natural gas microgrids to nuclear reactors, from battery storage to DIY renewables.

A proactive, expert, and well-connected site selection function is the key to successful expansion. In consultation with local market experts, suppliers and authorities, this team can build a dynamic power and land bank. New builds get the green light once business cases or, ideally, anchor tenants are in place.

North America continues to be the busiest market for AI investment and Virginia will continue to be the world’s largest data center market. To keep pace with demand, IMDC is building 150 MW of additional capacity in Manassas, Northern Virginia, as well as a major new campus in Richmond, Virginia, with 1 million square feet of space and over 200 MW of capacity.

Madrid is one of the fastest-growing emerging hubs in Europe with capacity forecast to increase from 228 MW in 2025 to 1.18 GW by 2030 (Structure Research). Spain’s Minister for Digital Transformation recently announced that IMDC’s 79 MW Madrid campus, scheduled for completion in 2027, had been proposed as a site for an EU-funded AI “gigafactory” project.

Operators are no longer looking at single data centers; they are focusing on campuses, and these are frequently very large. For a long time, the standard data center design was in the 40-50 MW range. AI specifications, particularly on the training side, are much larger, with campuses well over 1 GW of power at design. New BtM power solutions, from microgrids to battery storage or linked renewables, can also require very large amounts of land.

Inside the data center, GPUs change the way space is used. A flexible air-cooled traditional data hall would support 12 MW, whereas the same size of hall can support 32 MW with liquid cooling. This space saving is generally offset by the additional infrastructure required for power and heat rejection, which take up around 70% of overall space. However, this ratio will change once more with new running temperatures, power and cooling solutions.

Within the data hall, GPUs require around 10 times the power density of CPUs, and the gap is rising. According to Epoch AI, while compute power is doubling roughly every 5 months, power consumption is doubling every 1.4 years. This power intensity has a rolling impact on design. Most of IMDC’s AI-ready facilities are currently running at medium-level densities of 12-50 kW/rack, but these densities are increasing, and 200 kW racks are now being piloted in Virginia by IMDC.
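Doubling times are easier to compare once they are expressed as growth per year. The sketch below converts the two doubling times quoted above into annual growth factors; the function name is illustrative and the conversion assumes smooth exponential growth.

```python
def annual_growth_factor(doubling_time_years: float) -> float:
    """Growth factor per year for a quantity that doubles
    every `doubling_time_years` (assumes smooth exponential growth)."""
    return 2.0 ** (1.0 / doubling_time_years)


compute = annual_growth_factor(5 / 12)  # doubling roughly every 5 months
power = annual_growth_factor(1.4)       # doubling every 1.4 years

print(f"Compute: {compute:.2f}x per year")  # prints "Compute: 5.28x per year"
print(f"Power:   {power:.2f}x per year")    # prints "Power:   1.64x per year"
```

On these figures, compute grows roughly fivefold a year while power draw grows by about two thirds, so efficiency is improving even as absolute power demand keeps climbing.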

To enable these densities, power stream components need to scale up and adapt. New busway systems – open-channel, overhead plug-in power distribution – enable higher capacity, distributed and redundant supply, and can be adjusted or scaled without interrupting operations.

UPS controls also need to be upgraded to manage AI power surges. For instance, an NVIDIA GB200 cabinet has a Thermal Design Point of 132 kW, which means that the Electrical Design Point must tolerate throttling up to 1.5 times this amount during short-duration demand peaks. Suppliers are now refining their UPS controls to accommodate these surges close to source, avoiding any impact on generators or the grid. Battery Energy Storage Systems (BESS) can also help deal with this.
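The surge allowance described above works out as follows. This is a simple sketch using only the two figures given in the text (132 kW and a 1.5 times multiplier); the function and variable names are illustrative, not vendor terminology.

```python
def electrical_design_point_kw(tdp_kw: float, surge_factor: float = 1.5) -> float:
    """Peak electrical load a cabinet's supply must tolerate,
    given its thermal design point and a short-duration surge multiplier."""
    return tdp_kw * surge_factor


TDP_KW = 132.0  # thermal design point quoted for a GB200 cabinet

edp_kw = electrical_design_point_kw(TDP_KW)
surge_headroom_kw = edp_kw - TDP_KW  # extra load the UPS or BESS must absorb

print(f"Electrical design point: {edp_kw:.0f} kW")    # prints "Electrical design point: 198 kW"
print(f"Surge headroom: {surge_headroom_kw:.0f} kW")  # prints "Surge headroom: 66 kW"
```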

For a long time, 2N power redundancy was a fixed element in data center design. This is no longer the case. For dedicated AI data centers, less redundancy can reduce space and equipment costs, allowing the training or inference process (the “workhorse” elements) to either stop and start without negative impact (training) or reroute (non-time-critical inference), all while protecting the continuity of the “networked brain” of the operation with 99.999% uptime.

This too will change. When nuclear-powered data centers come online in a few years, this will affect configurations even more, and backup generators will disappear altogether. Currently, the power and cooling layout is about 2 to 3 times as big as the actual operational requirement; this change would make for a much more efficient one-to-one ratio.

The incorporation of liquid and air cooling to support high density AI arrays is the biggest single design change. Liquid is transformative, as it can pull 3000 times more heat out than air can. The evolution of GPUs is already driving a rapid progression in cooling layout and technology, and this pace of change will continue.

Active Rear Door Heat Exchangers (50 kW-70 kW) are becoming much more widespread for medium-intensity AI workloads, including H100 GPUs, which typically operate at 40 kW per rack. Also known as “Air Assisted Liquid Cooling,” this solution can handle higher power densities than legacy air cooling (up to 8 kW per rack) or the latest forms of air cooling, which can manage up to 30 kW per rack.

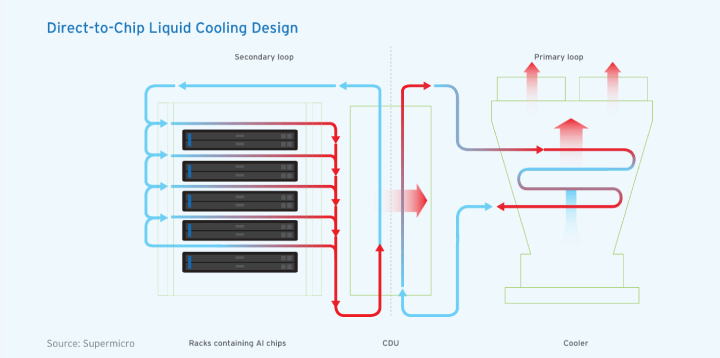

A step up from this is Direct-to-Chip Liquid Cooling, which can support power densities of between 50 kW and 200 kW. This is suitable for GPUs like the current generation of GB200s, which run at around 120 kW per rack.

The most advanced form is Liquid Immersion Cooling, capable of handling 250 kW to 1 MW per rack.
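The cooling tiers described above can be read as a simple lookup from rack density to the lightest option that covers it. The sketch below is illustrative only: it assumes each tier's upper figure is a hard ceiling and ignores everything else that drives a real cooling decision (chip specifications, water availability, retrofit constraints). The table and function names are hypothetical.

```python
# (cooling approach, maximum rack density in kW), taken from the ranges in the text.
# Assumption: the upper figure of each range is treated as a capacity ceiling.
COOLING_TIERS = [
    ("legacy air cooling", 8),
    ("latest air cooling", 30),
    ("active rear door heat exchanger", 70),
    ("direct-to-chip liquid cooling", 200),
    ("liquid immersion cooling", 1000),
]


def lightest_cooling_option(rack_kw: float) -> str:
    """Return the first tier whose ceiling covers the given rack density."""
    if rack_kw <= 0:
        raise ValueError("rack density must be positive")
    for name, max_kw in COOLING_TIERS:
        if rack_kw <= max_kw:
            return name
    raise ValueError("density exceeds the ranges described in the text")


print(lightest_cooling_option(40))   # H100-class rack: active rear door heat exchanger
print(lightest_cooling_option(120))  # GB200-class rack: direct-to-chip liquid cooling
```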

The liquid switch is far from completed. Currently, even the densest water-cooled AI or ML loads still require traditional air-cooled equipment. Specifications are also still in flux, with different chip manufacturers and server assemblies using different ways to get liquid to the cold plate. Many customers have air to liquid mixes that need to change after project kick-off to take account of new densities and temperatures.

In the rack area, coolant fluid circulates immediately above the chip surface via cold plates, which absorb the chips’ heat. The central section of the diagram shows heat being exchanged out of the fluid in a coolant distribution unit (CDU). In the Primary Loop, the heat is then transferred to the outside air via a cooler.

Building bigger campuses with new designs and components creates challenges in a supply-constrained market. Achieving on-time project delivery across a global platform needs strong and diverse supplier partnerships, pragmatic new relationships with regulators, planners and power providers, and crystal clear customer communication.

In the past, hyperscalers have taken two to four years to build their own data centers, while colocation providers are typically able to develop a data center in less than two years. Colo providers have existing campuses that are densely connected in or near key hubs, and their site selection and supply chain specialisms, combined with local regulatory know-how and design and construction expertise, can accelerate speed to market.

Big builds require a big and experienced team. Specialization across all key functions enables quicker decisions and global scale. These functions include energy procurement and partnership, site selection, design, construction, sourcing, mechanical and electrical project management, and solution engineering.

To minimise delivery risk and run multiple large-scale projects in parallel, supply chains need to grow constantly, a process driven by the sourcing lead. Booking equipment well ahead of project green lights is critical to minimise lead-times: if specifications change, most components can easily be redeployed elsewhere.

Scale also helps. Operating a global platform can be extremely useful for filling gaps, for instance tapping into or seconding talent across continents, or reducing delays by sourcing components for an American project from a European supplier.

In each global region, a preferred architect and project manager need to be supplemented with a long list of alternative subcontractors. There is no one-size-fits-all solution. Sometimes projects are managed through a single prime contractor, and sometimes contractors from civil engineers and security vendors to MEP providers need to be managed in-house.

New suppliers are constantly being tested out on smaller projects and success means promotion to larger builds. The quality control process is two-way. Vendor input is critical in such a fast-changing market, strengthening the project owner’s ability to respond to customer enquiries quickly and accurately.

At the rack level, AI standards are still fluid. Implementations are already a lot more standardized than they were a year ago, for instance in terms of the number of power shelves, hot or cold aisle size, piping standards, and CDU standards. NVIDIA in particular are taking the lead here, and operators need to keep in touch with the latest developments, for instance by attending their GTC conferences.

At the campus and data hall level, modular, reference-based designs are critical. They allow for mid-construction updates to layouts, pre-integrated cooling, and adaptable power infrastructure. By using pre-assembled, standardized components, operators can shorten timelines without compromising reliability or performance.

IMDC runs two standards globally, validated against energy-efficiency and operational performance criteria. Both offer air-cooled data centers provisioned to support liquid cooling. All of IMDC’s latest generation of facilities match these standards, with minimal variation across different global regions. They are a blueprint for integrating advanced computing hardware, sophisticated cooling, and high-density power systems, optimizing the overall data center footprint.

Innovation speeds delivery. IMDC is pioneering a Battery Energy Storage System (BESS) for its NJE-1 New Jersey facility. The 12 MW/23 MWh BESS is capable of supporting the entire facility load for hours if needed, responding to real-time grid conditions and reducing consumption during peak periods. A recent Google-funded study found that data centers that contribute to grid stability move up the connection queue. You can find out more about this and other approaches to supporting the grid transition in the IMDC ebook “Flexible Data Center Power”.
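As a rough guide to what a 12 MW/23 MWh rating means in practice, the sketch below estimates how long the battery could carry a given load. It is an idealized calculation: it ignores conversion losses, usable depth of discharge, and battery ageing, and the example load levels are hypothetical rather than NJE-1 operating data.

```python
def bess_runtime_hours(energy_mwh: float, power_mw: float, load_mw: float) -> float:
    """Idealized runtime of a battery system at a constant load.
    Ignores losses, depth-of-discharge limits, and degradation."""
    if load_mw <= 0:
        raise ValueError("load must be positive")
    if load_mw > power_mw:
        raise ValueError("load exceeds the battery's power rating")
    return energy_mwh / load_mw


ENERGY_MWH, POWER_MW = 23.0, 12.0  # ratings quoted in the text

at_full_power = bess_runtime_hours(ENERGY_MWH, POWER_MW, load_mw=12.0)
at_half_power = bess_runtime_hours(ENERGY_MWH, POWER_MW, load_mw=6.0)  # hypothetical load

print(f"At 12 MW: {at_full_power:.1f} hours")  # prints "At 12 MW: 1.9 hours"
print(f"At 6 MW:  {at_half_power:.1f} hours")  # prints "At 6 MW:  3.8 hours"
```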

Adopting a global green building commitment over the wide geographic spread of IMDC’s expansion pipeline is a challenge. The benefits, however, are clear in that it helps to align our design standards globally and provides our customers with consistent, universally applicable metrics.

Like North America’s LEED, but with a wider global user base, BREEAM is a global construction standard based on performance across a wide range of categories. It assesses every aspect of the data center build from planning, consultation and communication with the local community to land, water and materials use, energy, ecology, innovation, annual operational metrics, transport and recycling. BREEAM is now the IMDC standard for almost all new builds worldwide, and in markets where BREEAM is not available we align with the standard as closely as possible.

Currently, the following IMDC facilities are either accredited to BREEAM or in process:

In 2022 IMDC was the first data center provider in North America to earn the BREEAM (Building Research Establishment’s Environmental Assessment Method) design certification for its Phoenix AZP-2 data center. The BREEAM standard is now applied to new IMDC facilities worldwide.

In the face of climate change, one of the biggest threats to large-scale AI expansion is abandoning sustainability standards, thereby undermining the industry’s right to operate. As Microsoft CEO Satya Nadella expressed it, AI service providers need to provide equivalent value to people, or “they will quickly lose even the social permission to actually take something like energy, which is a scarce resource, and use it to generate these tokens.”

IMDC has offset 100% of its customer power with renewable VPPAs since 2017. Over and above this, the company’s 2022 commitment to 100% clean power tracked hour by hour by 2040 is still unique among global colocation providers. 75% of IMDC customer power is now tracked hourly and energy procurement is increasingly aligned with lowering carbon levels via local and on-site renewable sources.

This is the future of power reporting. The form of granular “space and time” tracking IMDC follows will soon be obligatory under the new Greenhouse Gas Protocol. While it may cause challenges for some operators in the short term, this method of ensuring that all clean energy claimed is local and simultaneous with use will be a key lever for reducing the real carbon footprint of accelerated data center infrastructure.

IMDC facility design has been developed to align with the Climate-Neutral Data Center Pact targets for PUE and WUE. When operating at full capacity, all new IMDC facilities achieve an annual PUE no higher than 1.3 in cool climates and 1.4 in warm climates. New facilities in cool climates that use potable water in water-stressed areas will meet a maximum WUE of 0.4 L/kWh at full capacity.
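For readers less familiar with these metrics, PUE is total facility energy divided by IT equipment energy, and WUE is site water use in litres divided by IT equipment energy in kWh. The sketch below checks a set of annual figures against the limits quoted above. The input values are invented for illustration and are not IMDC data, and the function names are hypothetical.

```python
# Design limits quoted in the text.
PUE_LIMITS = {"cool": 1.3, "warm": 1.4}
WUE_LIMIT_L_PER_KWH = 0.4  # cool climates using potable water in water-stressed areas


def pue(total_facility_kwh: float, it_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    return total_facility_kwh / it_kwh


def wue(site_water_litres: float, it_kwh: float) -> float:
    """Water Usage Effectiveness in litres per kWh of IT equipment energy."""
    return site_water_litres / it_kwh


def meets_pue_limit(pue_value: float, climate: str) -> bool:
    return pue_value <= PUE_LIMITS[climate]


# Hypothetical annual figures for a single facility (not real data).
it_kwh = 10_000_000
facility_pue = pue(total_facility_kwh=12_500_000, it_kwh=it_kwh)
facility_wue = wue(site_water_litres=3_500_000, it_kwh=it_kwh)

print(f"PUE {facility_pue:.2f}, within cool-climate limit: {meets_pue_limit(facility_pue, 'cool')}")
print(f"WUE {facility_wue:.2f} L/kWh, within limit: {facility_wue <= WUE_LIMIT_L_PER_KWH}")
```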

Pinch points, redirects and workarounds are inevitable in such a pressurized tech-driven market phase. Data center specialists need to provide reassurance that these and future challenges will be solved quickly, creatively, and sustainably.

The current era of building out accelerated infrastructure at scale will be viewed as one of the greatest achievements of this century, and the trust that collaboration and shared problem solving builds will forge the long-term relationships needed to deliver AI worldwide.

Your data is your advantage. Yet too often it remains untapped: disconnected from systems, underutilized, untrained, and exposed to risk. At Iron Mountain, we help the world’s most complex organizations unlock what’s possible with data centers that protect, connect, and activate your data like never before.

How? By combining our legacy of trusted security with advanced, cloud-agnostic data centers powered by 100% clean energy, delivering scalable, compliant, sustainable and future-ready solutions. Our global 1.3 GW portfolio empowers customers including leading cloud and AI providers to scale their data footprint, enhance security and unlock greater value.

What can we unlock together?

Contact a data center team member today!